The Optimism Tax

Every sales team I have ever worked with suffers from the same disease: systematic optimism. Deals that should be marked as stalled stay in the pipeline because the rep had a good feeling after the last call. Deals that are essentially dead get kept alive because removing them means admitting failure. And deals that were never real in the first place sit there padding the forecast because nobody wants to have the conversation about an empty pipeline.

I call this the optimism tax, and every company pays it. Consider a mid-market SaaS company running a $4M pipeline. If 40 percent of that pipeline is fiction (deals that will never close, deals that are stalled but nobody will admit it, deals where the prospect is actually evaluating your competitor), that is $1.6M in phantom revenue shaping real decisions. The VP of Sales hires two more reps. Marketing increases ad spend to support a growth target built on sand. Operations scales infrastructure for demand that will never arrive.

The result is predictable. Every quarter, the forecast says one thing and reality delivers 30 to 50 percent less. Leadership makes hiring decisions, investment decisions, and growth plans based on numbers that everyone quietly knows are inflated. It is the most expensive collective fiction in business.

Here is what makes it insidious: nobody is lying on purpose. Your reps genuinely believe those deals are real. Your managers genuinely believe the forecast is conservative. The optimism is baked into the human operating system, and no amount of pipeline review meetings will fix a problem rooted in cognitive bias. You cannot discipline your way out of a perception problem. You need a different lens entirely.

Why Humans Are Terrible at Deal Forecasting

This is not a character issue. It is a cognitive one. Humans are wired to weight recent positive signals more heavily than patterns of decline. A prospect who responds enthusiastically to one email gets scored as high probability, even if they have gone silent three times before. A champion who says they are taking the proposal to the board gets coded as 80 percent likely, even though four other prospects said the same thing last quarter and none of them closed.

There is a name for this in psychology: the recency bias. Your rep had a 45-minute call where the prospect was nodding along, asking great questions, even joking about implementation timelines. That single interaction overwrites three weeks of radio silence, two missed meetings, and the fact that the economic buyer has never once joined a call. The rep updates the CRM with a confident note and bumps the probability to 70 percent. In their mind, this deal is practically closed. In reality, the prospect was being polite because that is what humans do on calls.

Then there is the sunk cost problem. A rep who has invested eight weeks in a deal cannot emotionally afford to mark it as lost. Every hour spent on proposals, demos, and follow-up emails creates psychological pressure to keep the deal alive. Removing it from the pipeline feels like writing off all that effort. So the deal sits there — not progressing, not dead, just consuming space and distorting your numbers.

The fundamental problem is that humans assess deals based on feelings about conversations. AI assesses deals based on patterns across hundreds of data points: email response velocity, meeting attendance rates, stakeholder expansion or contraction, time in stage versus historical averages, and dozens of other signals that no human could track across 30 or 40 concurrent deals. Your best rep might manage a gut-level read on their top five deals. AI does it across your entire pipeline, every day, without the emotional baggage.

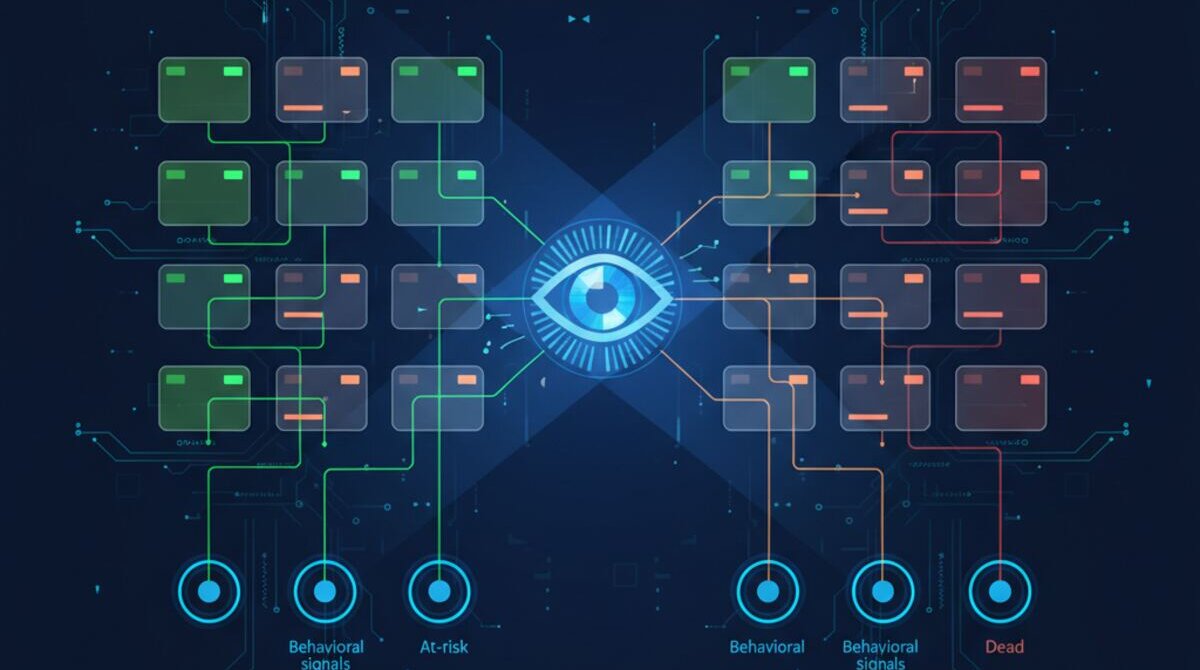

What AI Pipeline Intelligence Actually Measures

When AI analyses your pipeline, it looks at things humans cannot. It measures engagement velocity — not just whether someone responded, but how quickly, how substantively, and whether the pattern is accelerating or decelerating. A prospect who replied within two hours last month but is now taking three days to respond is sending a signal. A human might not notice that shift across 35 active deals. AI catches it instantly.

It tracks stakeholder breadth — are more people from the prospect company engaging, or has communication contracted to a single person? This is one of the strongest predictors of deal health. When a deal is moving forward, you see new names appearing on email threads: the IT director asking about integrations, the finance lead requesting pricing breakdowns, the end users asking about onboarding. When a deal is dying, the opposite happens. Communication narrows to your single champion, who is increasingly vague about next steps and timelines.

It monitors competitive signals — has the prospect been visiting competitor websites, engaging with competitor content, or mentioning other vendors? And it tracks the micro-signals that experienced sales leaders know matter but cannot systematically measure: whether the prospect is forwarding your materials internally, whether meeting invites are being accepted promptly or rescheduled repeatedly, whether the questions being asked have shifted from exploratory to implementation-focused.

Most importantly, it compares every deal against your historical patterns. If deals that close typically move from proposal to negotiation in 12 days and this deal has been sitting at proposal for 28 days with declining email engagement, the AI does not care that your rep had a great conversation last Tuesday. It flags the deal as stalling and recommends specific re-engagement actions. Not generic advice like "follow up" — targeted recommendations like "the technical stakeholder has disengaged, schedule a technical deep-dive to re-involve them" or "this deal matches the pattern of prospects who chose a competitor in Q3, consider a direct pricing conversation."

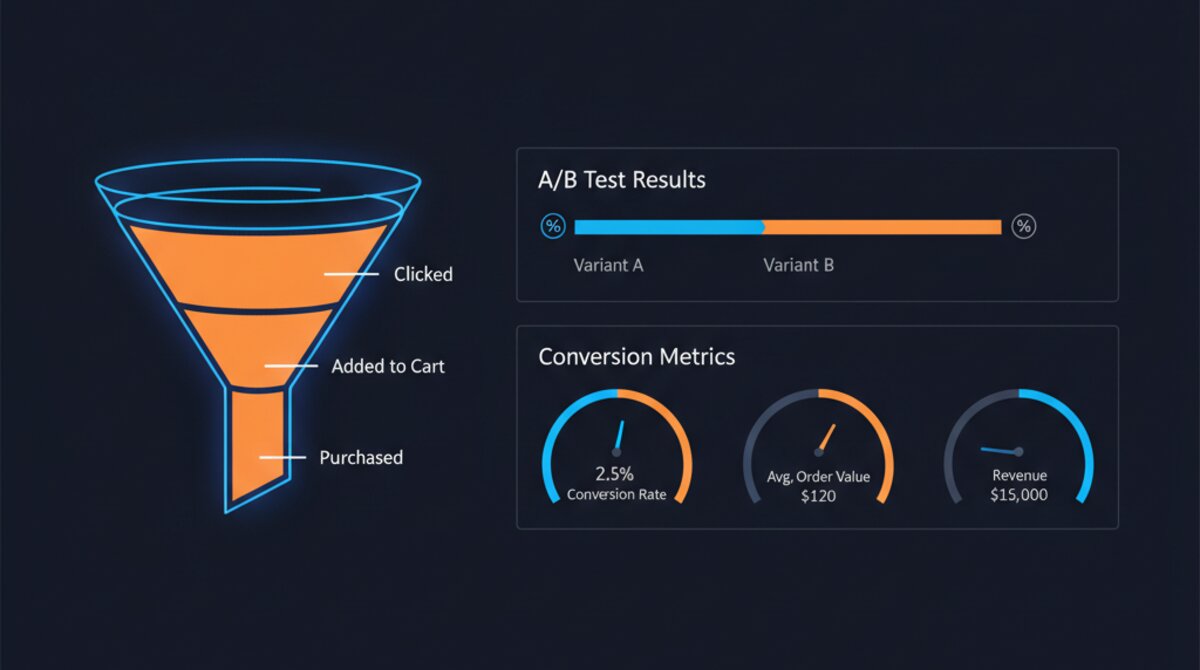

The Revenue Forecast That Actually Works

Traditional forecasting multiplies each deal's value by the probability assigned to its stage and adds up the results. A $100K deal in a stage weighted at 50 percent contributes $50K to the forecast. This method is barely better than guessing because it assumes all deals at the same stage have the same probability of closing, which is demonstrably false.

Think about two deals sitting side by side in your CRM, both at the proposal stage, both worth $100K. Deal A: the prospect responded to your proposal within four hours, asked detailed implementation questions, introduced you to their procurement team, and scheduled a follow-up for next week. Deal B: the prospect received your proposal ten days ago, has not responded to two follow-up emails, and the last meeting was rescheduled twice before being cancelled. In a stage-based forecast, both are weighted identically. That is not forecasting. That is rounding.

AI forecasting weights each deal individually based on its actual signals. A $100K deal with high engagement velocity, expanding stakeholder involvement, and rapid stage progression might get weighted at 85 percent. A $100K deal at the same stage but with declining engagement and a champion who just changed jobs might get weighted at 15 percent. The result is a forecast that is actually based on reality, not on the fiction of uniform stage probabilities.
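To make the difference concrete, here is a minimal Python sketch that weights Deal A and Deal B both ways. The stage table, the `Deal` fields, and the 85/15 per-deal probabilities are illustrative assumptions; in practice the per-deal figure would come from whatever scoring model you use.

```python
from dataclasses import dataclass

# Hypothetical stage weights; real values come from your own CRM configuration.
STAGE_PROBABILITY = {"discovery": 0.10, "proposal": 0.50, "negotiation": 0.80}


@dataclass
class Deal:
    name: str
    value: float
    stage: str
    signal_probability: float  # assumed to come from an engagement-signal model


def stage_weighted(deals):
    """Traditional method: every deal in the same stage gets the same probability."""
    return {d.name: d.value * STAGE_PROBABILITY[d.stage] for d in deals}


def signal_weighted(deals):
    """Per-deal method: each deal is weighted by its own signal-based probability."""
    return {d.name: d.value * d.signal_probability for d in deals}


# Deal A and Deal B from the example above, using the illustrative 85% / 15% weights.
deals = [
    Deal("Deal A", 100_000, "proposal", 0.85),
    Deal("Deal B", 100_000, "proposal", 0.15),
]

print(stage_weighted(deals))   # {'Deal A': 50000.0, 'Deal B': 50000.0}
print(signal_weighted(deals))  # {'Deal A': 85000.0, 'Deal B': 15000.0}
```

With only these two deals the totals happen to match ($100K either way). What changes is which deal the number rests on: the stage method counts $50K from a deal that has gone quiet, while the per-deal method puts almost all of the weight on the deal that is actually moving.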

The downstream impact is worth quantifying. Teams using AI-weighted forecasting typically see forecast accuracy improve from the 55 to 65 percent range to 85 to 90 percent. That means your CFO can actually plan around the numbers. Your CEO can make commitments to the board with confidence. Your sales managers can identify which deals need intervention this week rather than discovering stalled deals after they have already slipped to next quarter. When your forecast reflects reality, every decision built on top of it gets better — hiring, capacity planning, marketing investment, even your negotiation posture on individual deals.

See It in Action

If your pipeline reviews feel more like therapy sessions than strategy meetings, it is time to introduce some objectivity. The gap between what your reps believe and what the data shows is not a management problem — it is an information problem. And information problems have solutions.

Watch the interactive demo to see how Ai1 analyses your pipeline and delivers deal intelligence that your reps can actually act on. Or book a walkthrough — we will show you what AI finds in your pipeline data that humans miss. Most companies discover at least 20 percent of their pipeline is stalled within the first week of analysis. That is not bad news. That is the first honest look at your revenue you have ever had.

The Real Cost of Pipeline Fiction

Let me put specific numbers on this because I think most leaders underestimate how expensive bad forecasting really is.

Consider a mid-market B2B company with a $4M pipeline and a sales team of 8 reps. Their CRM shows they should close $2.4M this quarter based on stage-weighted probabilities. Leadership plans accordingly — they approve a new hire, commit to a marketing spend increase, and sign a lease expansion for the growing team.

Reality delivers $1.6M. That is an $800,000 miss. The new hire gets delayed. The marketing budget gets clawed back mid-campaign, wasting the spend already committed. The lease is now oversized for actual needs. None of these costs show up in a "pipeline accuracy" report, but they are real and they compound quarter over quarter.
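The size of the miss is simple arithmetic. A short sketch using the figures from this scenario (the function name is illustrative):

```python
def forecast_miss(forecast: float, actual: float) -> tuple[float, float]:
    """Return the dollar shortfall and the shortfall as a share of the forecast."""
    shortfall = forecast - actual
    return shortfall, shortfall / forecast


shortfall, share = forecast_miss(2_400_000, 1_600_000)
print(f"${shortfall:,.0f} miss, {share:.0%} of forecast")  # $800,000 miss, 33% of forecast
```

A shortfall of one third of the forecast sits inside the 30-40% range discussed in the next paragraph.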

Now multiply this across a year. Four quarters of 30-40% forecast misses create a pattern of over-investment followed by panic cuts. The company lurches between aggressive hiring and quiet layoffs. Vendor commitments get made and then renegotiated at penalty rates. Strategic initiatives get started and abandoned. For a company doing $6-8M in revenue, I estimate the true cost of unreliable forecasting at $200,000-$400,000 per year in misallocated resources, wasted commitments, and opportunity cost.

The most expensive number in your business is not a cost line item. It is the gap between what your pipeline says and what actually closes.

Case Study: The Deal That Looked Perfect

Here is a deal I tracked at a client company that illustrates the problem perfectly. A $180,000 opportunity with a Fortune 500 prospect. The rep had a strong champion, three demos completed, positive feedback at every stage. In the CRM, it sat at "Negotiation" with an 80% probability. It had been there for 6 weeks.

When we ran AI analysis on the same deal, the picture was completely different. Email response times from the prospect had gone from same-day to 3-4 days over the previous month. The champion had stopped cc'ing their VP on correspondence, a signal that internal sponsorship was weakening. The prospect had visited two competitor websites in the previous two weeks. And the deal had been in the "Negotiation" stage 2.3 times longer than the average deal that actually closed at this company.

The AI scored the deal at 22% probability, a massive gap from the rep's 80% assessment. The sales leader initially pushed back, trusting the rep's instinct. Three weeks later, the prospect went dark. The team eventually learned that the prospect had selected a competitor that had been quietly engaged for months. That $180,000 was counted in the forecast the entire time, distorting resource allocation decisions across the quarter.

The Full Signal Set: What AI Tracks That Humans Cannot

Communication Pattern Analysis

Response velocity trends. Not just whether someone replied, but the trend over time. Are replies getting faster (good) or slower (bad)? A prospect who replied in 2 hours last month and now takes 2 days is showing disengagement regardless of what they say in those replies.

Thread expansion vs. contraction. In deals that close, the number of people on email threads tends to expand as the deal progresses — more stakeholders getting involved. In deals that stall, threads contract down to a single point of contact. This signal alone is one of the strongest predictors we track.
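A rough way to measure expansion versus contraction is to count distinct prospect-side addresses in the earlier and later halves of a thread. The data shape, domain names, and half-split are assumptions for illustration, not a description of how any particular product does it.

```python
from datetime import date


def prospect_participants(messages, prospect_domain):
    """Count distinct prospect-side addresses in the earlier and later half of a thread.

    messages: iterable of (sent_on, addresses) pairs, where addresses is a set of emails.
    Returns (earlier_count, later_count); a falling count suggests the thread is contracting.
    """
    ordered = sorted(messages, key=lambda m: m[0])
    midpoint = len(ordered) // 2
    suffix = "@" + prospect_domain.lower()

    def people(chunk):
        return {a for _, addrs in chunk for a in addrs if a.lower().endswith(suffix)}

    return len(people(ordered[:midpoint])), len(people(ordered[midpoint:]))


# Hypothetical thread: IT and finance drop off, leaving only the champion.
thread = [
    (date(2024, 3, 1), {"champion@acme.example", "it.director@acme.example", "rep@vendor.example"}),
    (date(2024, 3, 8), {"champion@acme.example", "finance@acme.example", "rep@vendor.example"}),
    (date(2024, 3, 20), {"champion@acme.example", "rep@vendor.example"}),
    (date(2024, 3, 29), {"champion@acme.example", "rep@vendor.example"}),
]
print(prospect_participants(thread, "acme.example"))  # (3, 1)
```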

Communication substance. Are responses getting shorter and more generic? "Sounds great, let me check internally" repeated three times is not progress — it is stalling wrapped in politeness. AI detects this pattern reliably across your entire deal history.
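Substance is harder to measure than timing, but two crude proxies are reply length over time and repeated stock phrases. The phrase list below is a hypothetical placeholder; a production system would presumably learn its own markers from your deal history.

```python
# Hypothetical stalling phrases; a real system would learn its own markers from your deal history.
STALL_PHRASES = ("let me check internally", "circle back", "touch base next quarter")


def substance_signals(replies: list[str]) -> dict:
    """Compare reply length early vs. late and count replies that use a stock stalling phrase.

    replies: the prospect's replies as plain text, oldest first.
    """
    word_counts = [len(r.split()) for r in replies]
    half = len(word_counts) // 2
    early = word_counts[:half]
    late = word_counts[half:]
    return {
        "early_avg_words": sum(early) / len(early) if early else 0.0,
        "late_avg_words": sum(late) / len(late) if late else 0.0,
        "stalling_replies": sum(any(p in r.lower() for p in STALL_PHRASES) for r in replies),
    }


replies = [
    "Thanks for the proposal. Our IT team wants to review the SSO integration and migration timeline.",
    "Finance asked for a per-seat breakdown; can you send one by Friday?",
    "Sounds great, let me check internally.",
    "Sounds great, let me check internally.",
]
print(substance_signals(replies))
# {'early_avg_words': 14.0, 'late_avg_words': 6.0, 'stalling_replies': 2}
```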

Behavioral and Intent Signals

Meeting attendance patterns. Are more people from the prospect company attending meetings over time, or fewer? Are they sending senior people or delegating down? Both trajectory and seniority matter. Our meeting intelligence system captures these patterns automatically.

Document engagement. When you send a proposal, does it get opened once or forwarded to 4 people? AI tracks document views, time spent on specific sections, and sharing behavior to gauge genuine interest versus polite acknowledgment.

Competitive intent signals. Is the prospect researching your competitors? Attending competitor webinars? Engaging with competitor content on LinkedIn? These signals are invisible to your reps but highly predictive of deal outcomes.

Historical Pattern Matching

Stage velocity benchmarking. How long does this deal spend in each stage compared to deals that actually closed? A deal that sits in "Proposal Sent" for 3x the average is, statistically, far less likely to close than its current probability implies.
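The benchmark itself is a ratio: days in the current stage divided by the average for closed-won deals at that stage. A minimal sketch, reusing the 12-day versus 28-day example from earlier in the article; the history values and the 2.0 cut-off are hypothetical.

```python
from statistics import mean


def stage_duration_ratio(days_in_stage, closed_won_days):
    """Days this deal has spent in its current stage, divided by the closed-won average."""
    return days_in_stage / mean(closed_won_days)


def is_stalling(days_in_stage, closed_won_days, threshold=2.0):
    # threshold is a hypothetical cut-off; calibrate it against your own win/loss history
    return stage_duration_ratio(days_in_stage, closed_won_days) >= threshold


# Hypothetical history: closed-won deals spent 10-14 days at Proposal (mean 12).
history = [10, 12, 14, 12]
print(round(stage_duration_ratio(28, history), 2))  # 2.33
print(is_stalling(28, history))                      # True
```

Benchmarking against closed-won deals only, rather than all deals, keeps lost deals that lingered for months from inflating the "normal" duration.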

Win/loss pattern recognition. AI identifies which combinations of signals historically predict wins versus losses at your specific company, with your specific sales cycle, in your specific market. These patterns are unique to your business and impossible for humans to track across hundreds of deals.
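The article does not say which model finds these combinations. As one plausible stand-in, the sketch below fits a logistic regression by plain gradient descent on a few hypothetical historical deals, using three of the signals described above as features. The feature values, learning rate, and epoch count are illustrative assumptions; a real model would need far more deals and proper validation.

```python
import math

# Hypothetical features per historical deal:
#   [stage_duration_ratio, change_in_prospect_participants, response_time_slope_hours]
X = [
    [0.8, 2, -1.0], [1.0, 1, -0.5], [1.2, 3, 0.0], [0.9, 1, -2.0],   # won
    [2.5, -2, 6.0], [3.0, -1, 4.0], [2.0, -2, 10.0], [1.8, 0, 3.0],  # lost
]
y = [1, 1, 1, 1, 0, 0, 0, 0]


def sigmoid(z):
    # numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic(X, y, lr=0.1, epochs=2000):
    """Batch gradient descent on mean log-loss; returns (weights, bias)."""
    n, k = len(X), len(X[0])
    w, b = [0.0] * k, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * k, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(k):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def win_probability(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


w, b = fit_logistic(X, y)
stalling_deal = [2.3, -1, 5.0]  # long in stage, thread shrinking, replies slowing
healthy_deal = [0.9, 2, -1.0]   # on pace, thread growing, replies speeding up
print(f"stalling: {win_probability(w, b, stalling_deal):.2f}")
print(f"healthy:  {win_probability(w, b, healthy_deal):.2f}")
```

Logistic regression is used here only because it is easy to read: each learned weight shows how strongly one signal pushes a deal toward a win or a loss.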

Implementation: Getting Started with AI Pipeline Intelligence

Step 1: Connect Your Data Sources

At minimum, you need your CRM, email system, and calendar connected. Ideally, you also connect your document sharing platform and any intent data tools you use. The AI needs access to communication patterns, not just deal metadata in your CRM. Most integrations take less than an hour to set up.

Step 2: Establish Your Baseline

The AI needs 90 days of historical data to build your company-specific pattern models. During this period, it analyzes your past wins and losses to identify the signals that matter most for your particular sales cycle.

Step 3: Run Parallel Forecasts

For the first quarter, run AI forecasts alongside your traditional method. Compare the two at quarter-end. In every implementation we have done, the AI forecast has been closer to actual results.
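To keep the comparison honest, decide on the error measure before the quarter ends and apply it to both forecasts. This sketch uses absolute error as a share of the actual result, which is one common convention; the function names are illustrative and the figures are placeholders, not results from any implementation.

```python
def abs_pct_error(forecast: float, actual: float) -> float:
    """Absolute forecast error as a fraction of what actually closed."""
    return abs(forecast - actual) / actual


def compare_forecasts(stage_forecast: float, ai_forecast: float, actual: float):
    """Return each method's error and the name of the method that came closer."""
    errors = {
        "stage-weighted": abs_pct_error(stage_forecast, actual),
        "AI-weighted": abs_pct_error(ai_forecast, actual),
    }
    return errors, min(errors, key=errors.get)


# Placeholder quarter-end figures for illustration only.
errors, closer = compare_forecasts(stage_forecast=1_400_000, ai_forecast=1_080_000, actual=1_000_000)
for method, err in errors.items():
    print(f"{method}: {err:.0%} off")
print("closer:", closer)
```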

Step 4: Integrate Into Pipeline Reviews

Once you trust the data, use AI deal scores in your weekly pipeline reviews. Focus conversations on deals where AI and rep assessments diverge most — those gaps are where the most valuable coaching happens. Your customer health monitoring can extend this same intelligence to existing accounts, catching churn risks before they materialize.
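Finding where the two assessments diverge most is a sort by absolute gap. A sketch with hypothetical deal records; only the 80% and 22% figures come from the case study above.

```python
def biggest_disagreements(deals, top_n=3):
    """Deals sorted by the gap between the rep's probability and the model's score, largest first."""
    return sorted(deals, key=lambda d: abs(d["rep_prob"] - d["ai_prob"]), reverse=True)[:top_n]


deals = [
    {"name": "$180K Fortune 500 deal", "rep_prob": 0.80, "ai_prob": 0.22},  # case study above
    {"name": "Deal X", "rep_prob": 0.50, "ai_prob": 0.45},                   # hypothetical
    {"name": "Deal Y", "rep_prob": 0.30, "ai_prob": 0.60},                   # hypothetical
]
for d in biggest_disagreements(deals, top_n=2):
    # positive gap: rep is more optimistic than the model; negative: less optimistic
    print(d["name"], round(d["rep_prob"] - d["ai_prob"], 2))
```

Sorting on the absolute gap surfaces both kinds of disagreement: deals a rep may be overselling and deals the rep may be underrating.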

Step 5: Automate the Alerts

Configure the system to automatically flag deals where probability drops below a threshold, where engagement patterns shift negatively, or where stage duration exceeds historical norms. Your reps should wake up to a prioritized list of deals that need attention — not discover stalled deals weeks later during a review meeting.
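The alert rules in this step could be expressed as a few threshold checks plus a sort. Everything here is a hypothetical sketch: the field names and threshold values are placeholders, a sharp drop in the model score stands in for "engagement patterns shift negatively", and ranking by value at risk (deal value × (1 − probability)) is one reasonable ordering, not a prescribed one.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against your own pipeline history.
MIN_PROBABILITY = 0.30   # flag deals scored below this
MAX_DROP = 0.15          # flag deals whose score fell by at least this since the last run
MAX_STAGE_RATIO = 2.0    # flag deals in stage more than 2x the closed-won average


@dataclass
class DealSnapshot:
    name: str
    value: float
    probability: float        # current model score
    prior_probability: float  # score from the previous run
    stage_ratio: float        # days in stage / closed-won average for that stage


def alert_reasons(deal: DealSnapshot) -> list[str]:
    reasons = []
    if deal.probability < MIN_PROBABILITY:
        reasons.append("probability below threshold")
    if deal.prior_probability - deal.probability >= MAX_DROP:
        reasons.append("score dropped sharply")
    if deal.stage_ratio > MAX_STAGE_RATIO:
        reasons.append("stage duration above historical norm")
    return reasons


def morning_list(deals: list[DealSnapshot]) -> list[tuple[DealSnapshot, list[str]]]:
    """Flagged deals only, ordered by value at risk: value * (1 - probability)."""
    flagged = [(d, alert_reasons(d)) for d in deals]
    flagged = [(d, r) for d, r in flagged if r]
    return sorted(flagged, key=lambda pair: pair[0].value * (1 - pair[0].probability), reverse=True)


pipeline = [
    DealSnapshot("Deal A", 100_000, 0.85, 0.80, 0.9),
    DealSnapshot("Deal B", 100_000, 0.15, 0.40, 2.5),
    DealSnapshot("Deal C", 250_000, 0.45, 0.70, 1.1),
]
for deal, reasons in morning_list(pipeline):
    print(f"{deal.name}: {', '.join(reasons)}")
```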

See the Sales Pipeline Intelligence Automation in Action

Watch how Ai1 surfaces pipeline insights and risk signals with our sales pipeline intelligence automation workflow.
