The Year-One Wall

Every EOS implementation starts with fire. The leadership team is bought in, the Implementer is brilliant, Rocks are set, the L10 is running like clockwork. For the first two or three quarters, it feels transformational. And then something happens.

The L10 starts running long. Rock updates become five-word status reports instead of real progress checks. To-do completion rates drift from 90% down to 70%, then 60%. The Issues list grows but the IDS process feels rushed. Core value conversations get pushed to "next quarter." One-on-ones get cancelled because everyone is "too busy."

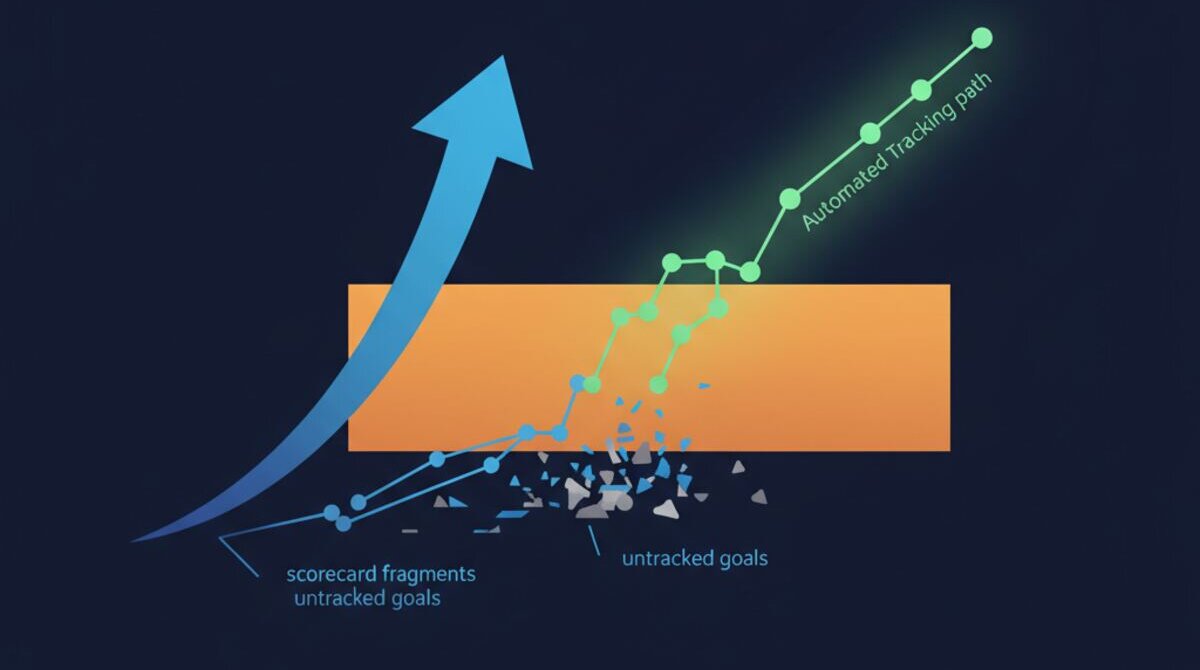

I call this the Year-One Wall, and I have watched dozens of companies hit it. The operating system itself is not the problem. EOS, Scaling Up, Rockefeller Habits — they are all excellent frameworks. The problem is that humans are terrible at sustained manual accountability. We start strong and then entropy takes over.

Why Manual Accountability Always Degrades

Think about what EOS actually asks your team to do every single week. Someone has to collect Rock status from every owner. Someone has to compile to-do completion rates. Someone has to pull scorecard numbers from five different tools. Someone has to review the IDS list for patterns. Someone has to check that one-on-ones happened and that action items were followed through.

In most companies, that "someone" is the Integrator, and they are already the busiest person on the team. So the data collection becomes rushed. Numbers get estimated instead of verified. Status reports become "I think we are on track" instead of evidence-based assessments. And gradually, the rigour that made EOS powerful in the first place fades into a weekly meeting that nobody looks forward to.

This is not a people problem. It is a systems problem. You are asking humans to do the work of a machine — collecting, aggregating, cross-referencing, and trend-analysing data across a dozen tools — and then wondering why it falls apart after a year.

What If Your EOS Data Collected Itself?

This is the core idea behind AI-powered EOS accountability. Instead of relying on people to manually report on Rocks, to-dos, meetings, and values, an AI agent connects to your existing tools — Asana, Monday, Ninety.io, Google Sheets, Slack, your calendar — and pulls the data automatically.

Not estimated data. Not self-reported data. Actual, verified, cross-referenced data pulled directly from the systems where work actually happens.

Your marketing lead says their Rock is "on track"? The AI checks Asana and sees that three of five milestones are overdue. Your sales director reports all to-dos complete? The AI verifies against the actual task records. Your L10 ran 90 minutes last week? The AI flags that it was supposed to be 60 and shows you the trend line over the last eight weeks.
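To make that first check concrete, here is a minimal sketch of comparing a self-reported status against milestone records. It assumes the milestones have already been exported from a task tool into plain records; the `Milestone` class, the function names, and the sample dates are all hypothetical and do not reflect how Ai1 or the Asana API actually work.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Milestone:
    # Hypothetical record shape; a real export would carry more fields.
    name: str
    due: date
    done: bool


def overdue_milestones(milestones, today):
    """Return milestones that are past their due date and not marked done."""
    return [m for m in milestones if not m.done and m.due < today]


def check_reported_status(reported, milestones, today):
    """Compare a self-reported Rock status with the milestone records."""
    overdue = overdue_milestones(milestones, today)
    if reported == "on track" and overdue:
        return (
            f"Mismatch: reported 'on track' but {len(overdue)} of "
            f"{len(milestones)} milestones are overdue"
        )
    return "Reported status is consistent with milestone records"


# Illustrative data only.
today = date(2024, 5, 15)
milestones = [
    Milestone("Brief approved", date(2024, 4, 1), True),
    Milestone("Landing page live", date(2024, 4, 15), True),
    Milestone("Email sequence", date(2024, 4, 29), False),
    Milestone("Ad creative", date(2024, 5, 6), False),
    Milestone("Launch webinar", date(2024, 5, 13), False),
]
result = check_reported_status("on track", milestones, today)
print(result)
# Mismatch: reported 'on track' but 3 of 5 milestones are overdue
```

The point of the sketch is that the comparison itself is simple. The hard part in practice is getting clean, current milestone data out of the tools.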

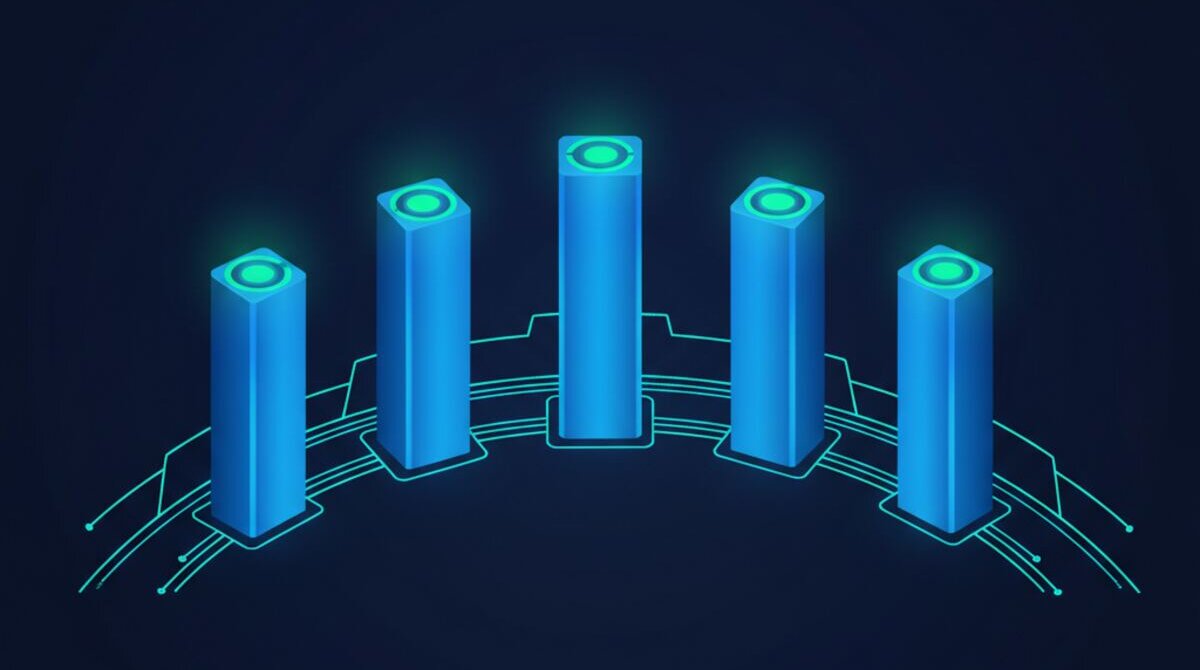

The Six Pillars of Automated EOS Tracking

Our Ai1 EOS Accountability automation monitors six interconnected areas that together give you a complete picture of your operating system health.

Rock Progress. Every Rock is tracked by owner with milestone completion, velocity trends, and projected finish dates. The AI does not just tell you a Rock is behind — it tells you by how much, why, and what needs to change this week to get it back on track.

L10 Meeting Health. Attendance tracking, duration trends, to-do completion rates from meeting to meeting, and IDS resolution velocity. You will know instantly whether your meetings are getting tighter or sloppier.

Roadblock Analysis. The AI cross-references your IDS issues list over time and identifies recurring patterns. If the same department keeps surfacing the same type of problem quarter after quarter, the AI flags it as a systemic issue — not just another item to discuss.

Core Value Alignment. Instead of a vague quarterly conversation about who is living the values, the AI analyses peer recognition patterns, 360 feedback data, and communication sentiment to produce data-driven core value scores for every team member.

One-on-One Effectiveness. Are one-on-ones actually happening? Are action items being followed through? Are development goals progressing? The AI tracks cadence adherence, completion rates, and coaching quality indicators so leaders can see who is investing in their people and who is going through the motions.

Quarterly Planning Prep. When the quarterly session arrives, the AI has already compiled a complete retrospective — Rock outcomes, scorecard trends, IDS patterns, team health indicators — packaged into a review deck that used to take your Integrator an entire day to build.

The Accountability Multiplier Effect

Here is what I have observed in companies that move to automated EOS tracking: accountability does not just hold steady, it accelerates. When people know that Rock progress is being measured objectively, not self-reported, behaviour changes quickly. The sandbagging stops. The "I forgot to update my status" excuse disappears. And the L10 meeting transforms from a status review into a genuine problem-solving session.

Your Integrator gets two to three hours back every week. Your L10 drops from 90 minutes back to 60 because the data is already compiled. Your quarterly planning sessions start with a complete picture instead of scrambled last-minute data pulls. The operating system starts operating the way it was designed to.

What the AI Catches That Humans Miss

Manual tracking is binary: a Rock is on track or off track. AI tracking adds direction and rate of change. It sees that a Rock is technically on track but decelerating: the first three milestones were completed ahead of schedule, but the fourth is dragging, and at current velocity it will miss the deadline by two weeks. That is actionable intelligence you rarely get from a spreadsheet.
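One simple way to picture the "current velocity" idea is to project a finish date from how many milestones were completed in a recent window. The sketch below is an illustration under stated assumptions, not the method Ai1 uses: `project_finish`, the 28-day window, and the sample dates are all hypothetical.

```python
from datetime import date, timedelta


def project_finish(completed_dates, total_milestones, today, window_days=28):
    """Project a finish date from milestones completed in the recent window.

    Returns None when nothing was completed in the window, because the
    recent velocity is then zero and no finish date can be projected.
    """
    remaining = total_milestones - len(completed_dates)
    if remaining <= 0:
        return max(completed_dates)
    window_start = today - timedelta(days=window_days)
    recent = [d for d in completed_dates if d > window_start]
    if not recent:
        return None
    # Days needed = remaining milestones / (recent completions per day).
    days_needed = round(remaining * window_days / len(recent))
    return today + timedelta(days=days_needed)


# Illustrative data: three of five milestones done, only one of them recently.
completed = [date(2024, 4, 8), date(2024, 4, 19), date(2024, 5, 10)]
today = date(2024, 5, 31)
deadline = date(2024, 7, 12)

projected = project_finish(completed, total_milestones=5, today=today)
slip_days = (projected - deadline).days
print(projected, slip_days)
# 2024-07-26 14
```

A recent-window velocity is deliberately crude. It reacts quickly to a slowdown, which is what you want for an early warning, but it will overreact to a single quiet month.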

The AI also spots correlations across pillars. When one-on-one cadence drops in a department, to-do completion rates in that same department typically follow within two weeks. When L10 attendance becomes inconsistent, IDS resolution velocity degrades. These cross-pillar patterns are invisible in manual tracking but obvious to an AI that is watching everything simultaneously.
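A lagged correlation is one basic way to look for this kind of cross-pillar pattern. The sketch below is a hedged illustration only: the weekly figures are invented, `lagged_correlation` is a hypothetical helper, and a high correlation on eight data points suggests where to look rather than proving cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def lagged_correlation(leading, following, lag):
    """Correlate leading[t] with following[t + lag] for a lag in weeks."""
    if lag == 0:
        return pearson(leading, following)
    return pearson(leading[:-lag], following[lag:])


# Invented weekly data for one department over eight weeks.
one_on_ones_held = [4, 4, 3, 3, 2, 1, 1, 1]
todo_completion = [0.90, 0.90, 0.90, 0.90, 0.80, 0.80, 0.70, 0.60]

same_week = lagged_correlation(one_on_ones_held, todo_completion, lag=0)
two_weeks_later = lagged_correlation(one_on_ones_held, todo_completion, lag=2)
print(round(same_week, 2), round(two_weeks_later, 2))
```

In this made-up series, the two-week lag lines up more tightly than the same-week comparison, which is the shape of the pattern described above.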

And perhaps most importantly, the AI removes the politics from accountability. When a human delivers tough news about someone's Rock being behind, it can feel personal. When an AI report shows objective data from the tools everyone agreed to use, the conversation shifts from blame to problem-solving. It is not "Sarah says you are behind." It is "the data shows milestone four is two weeks overdue and here are three options to close the gap."

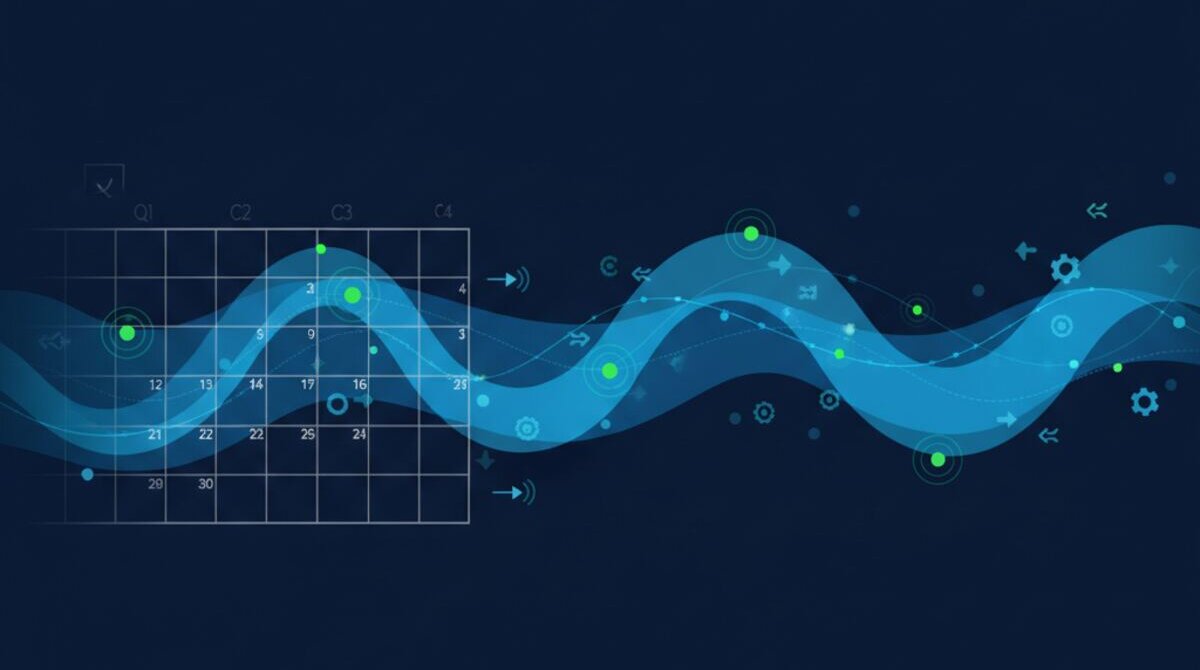

From Quarterly Check-Ins to Continuous Improvement

Traditional EOS runs on a quarterly cadence with weekly L10 touchpoints. That model works, but it means you often do not catch problems until they have been compounding for weeks. An AI-powered accountability system can run weekly reports, flag anomalies in real time, and even send proactive alerts when a metric crosses an agreed threshold.

Imagine getting a Slack message on Tuesday saying: "Heads up — sales team to-do completion dropped to 62% this week, down from 85% average. Three items from last L10 are overdue. One Rock milestone was pushed back without explanation." That is not micromanagement. That is an early warning system that lets you address issues before they become quarterly surprises.
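The threshold logic behind an alert like that can be very small. Here is a minimal sketch, assuming weekly completion rates are already available as fractions. The `completion_alert` function, the 15-point drop threshold, and the sample history are hypothetical, and actually delivering the message to Slack is left out.

```python
from statistics import mean


def completion_alert(team, history, current, max_drop=0.15):
    """Return an alert message if completion fell well below its average.

    history and current are completion rates as fractions (0.85 = 85%).
    Returns None when the drop is smaller than max_drop.
    """
    baseline = mean(history)
    if baseline - current >= max_drop:
        return (
            f"Heads up: {team} to-do completion dropped to {current:.0%} "
            f"this week, down from {baseline:.0%} average."
        )
    return None


# Illustrative data: four prior weeks, then this week's rate.
history = [0.86, 0.84, 0.85, 0.85]
message = completion_alert("sales team", history, current=0.62)
print(message)
# Heads up: sales team to-do completion dropped to 62% this week, down from 85% average.
```

Comparing against the team's own recent average, rather than a fixed target, keeps the alert quiet for teams that are steady and loud for teams that are slipping.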

Breaking Through the Year-One Wall

The companies that get the most from EOS are the ones that maintain discipline over years, not quarters. But maintaining that discipline manually is exhausting. It relies on the Integrator's energy, the team's willingness to self-report honestly, and the Visionary's patience with process — three things that naturally degrade over time.

AI does not get tired. It does not sandbag. It does not forget to update its status. It does not cancel one-on-ones because it had a busy week. It just watches, measures, analyses, and reports — consistently, accurately, and without politics.

That is how you break through the Year-One Wall. Not by trying harder, but by building a system that makes accountability automatic.

See It in Action

We built an interactive demo that shows exactly how Ai1 connects to your EOS tools and produces a complete accountability report. You can watch the AI pull Rock data from Asana, analyse L10 meeting patterns, score core value alignment, and deliver findings in a comprehensive report — all in under five minutes.

Watch the EOS Accountability demo →
