I want you to do something right now. Open Google PageSpeed Insights in another tab, paste in your website URL, and run the test on mobile. I’ll wait.

If you’re like most business owners I talk to, the number that comes back is going to surprise you. And not in a good way.

The last time you checked your site speed was probably when you launched the site. Or maybe after a redesign. Since then? Nobody looked. And while nobody was looking, your site got slower. One tracking pixel at a time. One WordPress plugin at a time. One unoptimized hero image at a time.

That’s the problem I want to talk about today. Not the performance itself, but the silence. The fact that performance degrades invisibly, and by the time anyone notices, the damage is already done.

The silent regression problem

Here’s what nobody tells you about website performance: it doesn’t break. It erodes.

A developer adds a new chat widget. Your marketing team installs a heat mapping tool. Someone uploads a 4MB product photo without compressing it. Your CMS pushes an update that adds 200KB of JavaScript you didn’t ask for. Each change is small. Each one is invisible in isolation.

But they stack. Over six months, your mobile performance score drops from 85 to 52. Your Largest Contentful Paint goes from 1.8 seconds to 4.2 seconds. Your Total Blocking Time triples.

And you have no idea, because nothing broke. The site still loads. It just loads slower. Slow enough that Google notices. Slow enough that visitors leave before the page finishes rendering.

53% of mobile visits are abandoned when a page takes longer than 3 seconds to load. That’s not my number. That’s Google’s, from their own research. The same research found that as load time goes from 1 second to 3 seconds, the probability of a bounce increases by 32%.

So the question isn’t whether your site is getting slower. It almost certainly is. The question is whether you’re going to know about it before your customers do.

Why Core Web Vitals matter more than you think

In 2021, Google started using Core Web Vitals as a ranking signal. That means your site’s performance is one of the inputs that affects where you show up in search results. Relevant content still matters most, but when two pages answer the same query about equally well, the faster experience has a real, measurable edge.

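Two of the four metrics below, LCP and CLS, are official Core Web Vitals. The other two, FCP and TBT, are lab metrics that PageSpeed Insights reports alongside them. TBT is the closest lab stand-in for the third Core Web Vital, Interaction to Next Paint (INP), which can only be measured on real user interactions. The four metrics to track are: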

Largest Contentful Paint (LCP) measures how long it takes for the biggest visible element on your page to load. Google wants this under 2.5 seconds. Anything over 4 seconds is considered poor.

First Contentful Paint (FCP) measures how quickly the first piece of content appears. This is the user’s first visual confirmation that the page is actually loading.

Total Blocking Time (TBT) measures how long JavaScript blocks the main thread, preventing the user from interacting with the page. You know that feeling when you tap a button and nothing happens? That’s TBT at work.

Cumulative Layout Shift (CLS) measures visual stability. When elements jump around as the page loads, that’s layout shift. It’s the reason you accidentally click the wrong link when a banner ad pushes the content down.

These four metrics aren’t abstract technical measurements. They directly correlate with whether a visitor stays on your site, engages with your content, and converts into a customer.

Vodafone improved their LCP by 31% and saw an 8% increase in sales. NDTV reduced their LCP by 55% and saw a 50% reduction in bounce rate. These aren’t marginal gains. These are business outcomes that show up in revenue.

The “set it and forget it” myth

I hear this constantly from business owners: “We optimized our site speed when we launched. We’re good.”

No. You were good. On launch day.

Web performance is not a one-time project. It’s a daily discipline. Your site is a living system. Every change you make, every third-party script you add, every content update shifts the performance baseline.

The sites that win are the ones that catch regressions before Google does.

Think about it like fitness. You don’t go to the gym once and stay in shape forever. You have to keep showing up. And more importantly, you have to measure. If you stopped weighing yourself for six months, you wouldn’t be shocked to find the number changed.

Your website works the same way. Without regular measurement, entropy wins. Performance drifts. And because nobody is watching the numbers, nobody sounds the alarm.

The businesses that maintain fast sites aren’t the ones with the best developers. They’re the ones with the best monitoring.

What daily automated monitoring actually looks like

When I explain our PageSpeed Monitor to clients, the first thing they ask is: “What do I have to do every day?”

Nothing. That’s the point.

Every morning, the system runs Google PageSpeed Insights against your site for both mobile and desktop. It pulls the performance score, the four metrics described above (LCP, FCP, TBT, and CLS), and the specific recommendations Google generates for improvement.
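If you want a feel for what that morning run involves, here’s a minimal sketch. It assumes the public PageSpeed Insights v5 endpoint and the Lighthouse audit IDs that endpoint returns as I understand them. The function names (`build_psi_url`, `extract_metrics`, `run_check`) are made up for illustration and are not the actual workflow’s code.

```python
import json
import urllib.parse
import urllib.request

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"

# Lighthouse audit IDs for the four tracked metrics (assumed, check your own responses).
METRIC_AUDITS = {
    "lcp_ms": "largest-contentful-paint",
    "fcp_ms": "first-contentful-paint",
    "tbt_ms": "total-blocking-time",
    "cls": "cumulative-layout-shift",
}


def build_psi_url(page_url, strategy, api_key=None):
    """Build the request URL for one device type ("mobile" or "desktop")."""
    params = {"url": page_url, "strategy": strategy, "category": "performance"}
    if api_key:
        params["key"] = api_key
    return PSI_ENDPOINT + "?" + urllib.parse.urlencode(params)


def extract_metrics(response):
    """Pull the 0-100 score and the four metrics out of a parsed PSI response."""
    lighthouse = response["lighthouseResult"]
    score = lighthouse["categories"]["performance"]["score"]  # reported as 0-1
    metrics = {"score": round(score * 100)}
    for name, audit_id in METRIC_AUDITS.items():
        metrics[name] = lighthouse["audits"][audit_id]["numericValue"]
    return metrics


def run_check(page_url, strategy, api_key=None):
    """Fetch one PSI result over the network and return its parsed metrics."""
    url = build_psi_url(page_url, strategy, api_key)
    with urllib.request.urlopen(url, timeout=120) as resp:
        return extract_metrics(json.load(resp))
```

Calling `run_check("https://example.com/", "mobile")` and then again with `"desktop"` would give you the two daily data points. A scheduler (cron, or whatever automation tool you already use) would handle the “every morning” part.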

All of that gets logged automatically to a Google Sheet. One row per day, per device type. Over time, you build a historical record of your site’s performance that shows trends, patterns, and the exact dates when regressions happened.
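Here’s a rough sketch of that “one row per day, per device type” layout. To keep it runnable without credentials, it writes to a CSV as a stand-in for the Google Sheet. The column names and the sample numbers are assumptions for illustration, not the real sheet’s schema.

```python
import csv
import io
from datetime import date

COLUMNS = ["date", "device", "score", "lcp_ms", "fcp_ms", "tbt_ms", "cls"]


def make_row(day, device, metrics):
    """Flatten one check into a single log row: one row per day, per device."""
    return [day.isoformat(), device] + [metrics[c] for c in COLUMNS[2:]]


def append_rows(log_file, rows, write_header=False):
    """Append rows to any open text file object (the CSV stands in for the Sheet)."""
    writer = csv.writer(log_file)
    if write_header:
        writer.writerow(COLUMNS)
    writer.writerows(rows)


# Usage with an in-memory file; a real run would open a file in append mode.
log = io.StringIO()
mobile = {"score": 52, "lcp_ms": 4200, "fcp_ms": 1900, "tbt_ms": 600, "cls": 0.12}
desktop = {"score": 88, "lcp_ms": 1400, "fcp_ms": 700, "tbt_ms": 90, "cls": 0.02}
today = date(2025, 1, 16)
append_rows(
    log,
    [make_row(today, "mobile", mobile), make_row(today, "desktop", desktop)],
    write_header=True,
)
print(log.getvalue())
```

Keeping mobile and desktop as separate rows, rather than separate columns, makes it easy to filter or chart either device on its own later.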

If your mobile score drops below 50 out of 100, the system fires a Slack alert immediately. No waiting for your next monthly check-in. No hoping someone remembers to run a test. You know the same day.

The alert includes the current score, the specific metrics that degraded, and actionable recommendations from the PageSpeed API about what to fix. Not vague suggestions. Specific instructions: compress this image, defer this script, remove this unused CSS.
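The alert rule itself is simple enough to sketch. This version assumes a Slack incoming webhook, which accepts a JSON body with a `text` field. The message wording, the function names, and the webhook URL you would pass in are all hypothetical.

```python
import json
import urllib.request

ALERT_THRESHOLD = 50  # mobile score out of 100, per the rule described above


def needs_alert(device, score, threshold=ALERT_THRESHOLD):
    """Alert only when the mobile score falls below the threshold."""
    return device == "mobile" and score < threshold


def build_alert(page_url, metrics, recommendations):
    """Build a Slack incoming-webhook payload: a JSON object with a "text" field."""
    lines = [
        f"PageSpeed alert for {page_url}: mobile score {metrics['score']}/100",
        f"LCP {metrics['lcp_ms'] / 1000:.1f}s, "
        f"TBT {metrics['tbt_ms']:.0f}ms, CLS {metrics['cls']:.2f}",
    ]
    lines += [f"- {rec}" for rec in recommendations]
    return {"text": "\n".join(lines)}


def send_alert(webhook_url, payload):
    """POST the payload to a Slack incoming webhook URL that you supply."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return resp.status
```

Building the payload separately from sending it means you can preview or test the message without posting anything to a channel.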

That’s what shifts performance from a reactive problem to a proactive practice. You stop finding out about speed issues from your SEO agency’s quarterly report. You find out the morning after the problem appears.

The cost of waiting for quarterly reports

Most businesses that monitor performance at all do it through their SEO agency or marketing team. They run a PageSpeed test once a month, maybe once a quarter, as part of a larger report.

Here’s the math on why that doesn’t work.

Let’s say a developer pushes a change on January 15 that adds a heavy JavaScript library to your site. Your mobile score drops from 78 to 41. Your next SEO report comes in on March 1. That’s 45 days of degraded performance before anyone even knows about it.

During those 45 days, Google has re-crawled your site multiple times with the new, slower scores. Your rankings have started to slip. Mobile users are bouncing at higher rates. If you get 10,000 monthly mobile visitors and your bounce rate rises by 15 percentage points, that’s 1,500 lost visitors per month. At a 2% conversion rate and a $200 average order value, that’s 30 lost orders, or $6,000 in lost revenue. Per month.

With daily monitoring, you know about that same regression on January 16. The developer fixes it by January 17. Total exposure: two days instead of 45.

The ROI on automated monitoring isn’t theoretical. It’s the difference between catching a problem in 24 hours and catching it in 45 days.
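If you want to plug in your own numbers, the arithmetic from the example above fits in a few lines. The inputs are the ones from this section (10,000 visitors, an extra 15% of them bouncing, 2% conversion, $200 orders). The year used for the dates is my assumption, since any non-leap year gives the same 45 days.

```python
from datetime import date


def monthly_revenue_loss(visitors, bounce_increase, conversion_rate, order_value):
    """Return (lost visitors, lost revenue) per month for an added bounce share."""
    lost_visitors = visitors * bounce_increase
    return lost_visitors, lost_visitors * conversion_rate * order_value


lost_visitors, lost_revenue = monthly_revenue_loss(10_000, 0.15, 0.02, 200)

# Exposure windows: change pushed Jan 15, found Mar 1 (quarterly report)
# versus fixed by Jan 17 (daily monitoring). The year 2025 is an assumption.
pushed = date(2025, 1, 15)
report_exposure = (date(2025, 3, 1) - pushed).days
daily_exposure = (date(2025, 1, 17) - pushed).days

print(f"{lost_visitors:.0f} lost visitors, ${lost_revenue:,.0f} lost per month")
print(f"Exposure: {report_exposure} days vs. {daily_exposure} days")
```

Swap in your own traffic, conversion rate, and order value to see what a slow month costs your business specifically.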

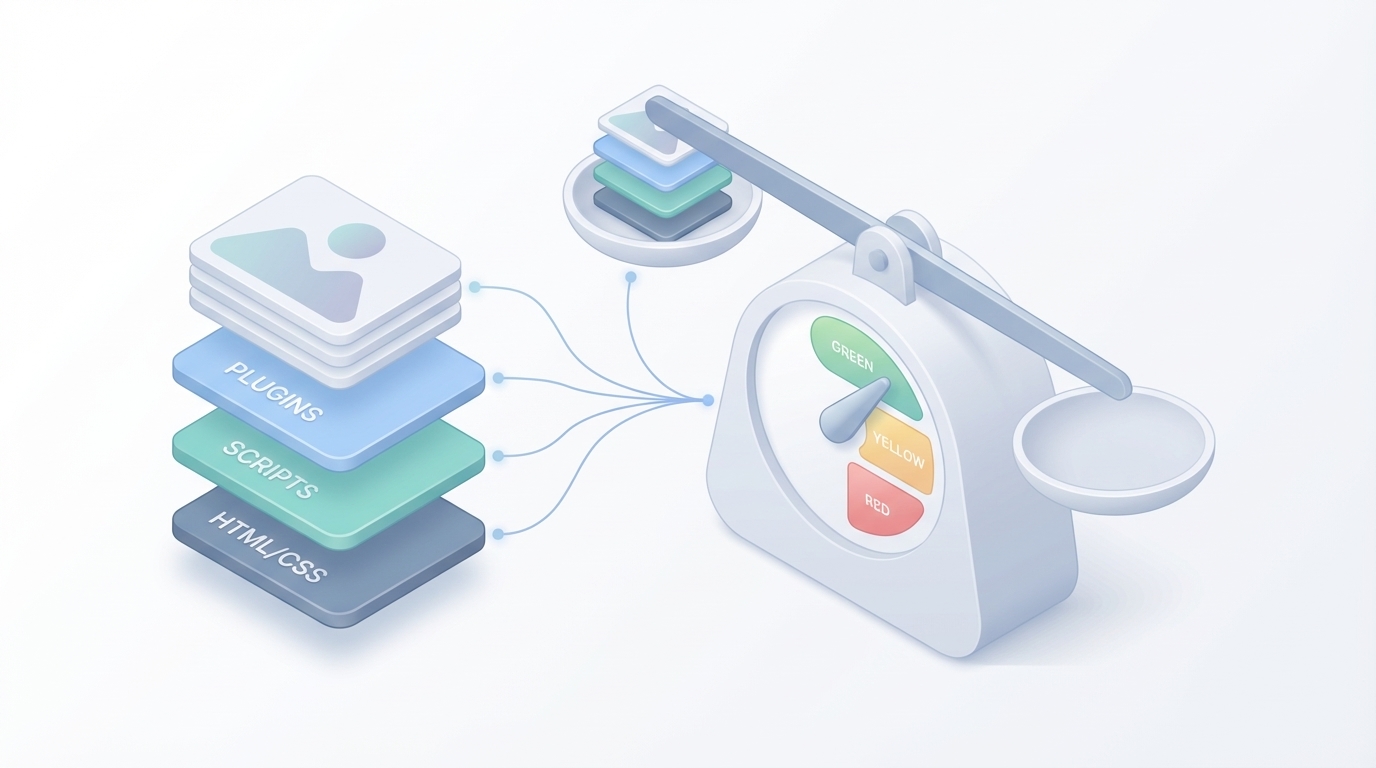

What causes performance regressions (the usual suspects)

After running daily PageSpeed checks across dozens of sites, I see the same patterns over and over.

Plugin and CMS updates. WordPress core updates, plugin updates, and theme updates routinely add JavaScript, change render paths, or introduce new DOM elements. A single WooCommerce update once added 180KB of unminified JavaScript to every page load on a client’s site.

Third-party scripts. Chat widgets, analytics tools, A/B testing platforms, retargeting pixels. Each one adds load time. Most business owners have no idea how many third-party scripts are running on their site. I’ve audited sites with 14 different tracking scripts on a single page.

Unoptimized images. Someone on the content team uploads a 5MB PNG straight from their phone. It renders at 400 pixels wide but the browser downloaded a 4000-pixel-wide file. This is the most common performance killer I see, and it happens silently because the image still displays correctly.

Render-blocking resources. Stylesheets in the head of the document, and JavaScript files loaded there without async or defer attributes. Every one of these files blocks rendering until it finishes downloading. The more you add, the longer users stare at a blank screen.

Font loading. Custom web fonts that block text rendering while they download. Users see a flash of invisible text, or the entire layout shifts when the font finally loads. Both are bad for user experience: the invisible text delays the first readable content, and the late font swap hurts your CLS score.

None of these are malicious. They’re the natural result of a website being actively used and maintained. But without monitoring, they accumulate until your site is noticeably slow.
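PageSpeed reports most of these suspects as individual audits, each with its own score. Here’s a small sketch of how you might rank them, worst first. It assumes each audit carries a `title`, a `score` between 0 and 1 (or none for informational audits), and an optional `displayValue`. The sample audits are hand-written and their IDs, titles, and numbers are illustrative, since these vary between Lighthouse versions.

```python
def failing_audits(audits, pass_score=0.9):
    """Return (title, display value) for scored audits below pass_score, worst first."""
    flagged = [
        audit for audit in audits.values()
        if isinstance(audit.get("score"), (int, float)) and audit["score"] < pass_score
    ]
    flagged.sort(key=lambda audit: audit["score"])
    return [(audit["title"], audit.get("displayValue", "")) for audit in flagged]


# Hand-written sample in the shape of `lighthouseResult.audits`; values are made up.
sample_audits = {
    "render-blocking-resources": {
        "title": "Eliminate render-blocking resources",
        "score": 0.4,
        "displayValue": "Potential savings of 900 ms",
    },
    "uses-optimized-images": {
        "title": "Efficiently encode images",
        "score": 0.1,
        "displayValue": "Potential savings of 3,800 KiB",
    },
    "font-display": {"title": "Ensure text remains visible during webfont load", "score": 1},
    "final-screenshot": {"title": "Final Screenshot", "score": None},
}

for title, detail in failing_audits(sample_audits):
    print(f"{title}: {detail}")
```

The 0.9 cutoff is a parameter, not a rule. Loosen or tighten it depending on how noisy you want the list to be.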

Mobile vs. desktop: the gap nobody talks about

Here’s something that surprises almost every client I work with. Their desktop PageSpeed score is usually 85 or higher. Their mobile score? Often below 50.

The gap exists because mobile testing simulates a mid-range phone on a 4G connection. Your site might load in 1.5 seconds on your MacBook Pro with gigabit fiber. On a Samsung Galaxy A14 on T-Mobile, it takes 6 seconds. Google tests against that reality, not yours.

And here’s the kicker: over 60% of web traffic is now mobile. For most local businesses, that number is closer to 70%. If your mobile score is poor, you’re delivering a bad experience to the majority of your visitors.

Our PageSpeed Monitor runs both mobile and desktop tests every day. You see both scores side by side. You catch mobile-specific regressions that wouldn’t show up in a desktop-only test. And since Google moved to mobile-first indexing, the mobile version of your site is the one Google primarily uses for indexing and ranking.

Building a performance culture, not just a performance report

The real value of daily monitoring isn’t the data. It’s the behavior change it creates.

When your team knows that every change gets measured the next morning, they start thinking about performance before they push code. The developer asks: “Will this script affect load time?” The content editor checks image file sizes before uploading. The marketing team evaluates whether a new tracking pixel is worth the performance cost.

That’s the shift from reactive to proactive. From “fix it when it breaks” to “don’t let it break.”

The Google Sheet becomes a shared reference point. When someone proposes adding a new chat tool, you can look at the performance log and say: “The last time we added a similar tool, our mobile score dropped 12 points. Let’s make sure this one loads async.” That’s a different conversation than you would have had without the data.

Performance culture doesn’t require performance engineers. It requires visibility. Make the numbers visible, and people naturally start caring about them.

What this costs (almost nothing)

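The Google PageSpeed Insights API is free. Google gives you 25,000 queries per day at no charge. For a single URL checked once a day on each device type (mobile and desktop), that’s 2 queries per day, or 730 queries per year. You could monitor 34 URLs for an entire year and still use fewer queries than a single day’s free quota. On any given day, those 34 URLs would use 68 queries, well under 1% of the quota.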
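The quota arithmetic is easy to check for your own number of URLs. This sketch takes the 25,000-per-day figure above as given and assumes one mobile and one desktop check per URL per day.

```python
DAILY_QUOTA = 25_000         # free queries per day, as stated above
CHECKS_PER_URL_PER_DAY = 2   # one mobile run, one desktop run


def queries_per_year(urls):
    """Total queries in a 365-day year for the given number of URLs."""
    return urls * CHECKS_PER_URL_PER_DAY * 365


def daily_quota_share(urls):
    """Fraction of the daily quota used by one day's checks."""
    return urls * CHECKS_PER_URL_PER_DAY / DAILY_QUOTA


print(queries_per_year(1))             # 730
print(queries_per_year(34))            # 24820
print(f"{daily_quota_share(34):.2%}")  # 0.27%
```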

The only variable cost is the AI analysis layer that interprets the results and generates contextual recommendations. That runs a few cents per check. For a typical setup monitoring one to three URLs, the total monthly cost is under $5.

Compare that to performance monitoring SaaS tools. Most charge $30 to $200 per month for similar functionality. Some enterprise tools charge $500 or more. And many of those tools still require you to log in to a dashboard to see the results, instead of pushing alerts to where you already work.

The automation we built runs on tools you already have: a Google Sheet for logging, Slack for alerts, and the free PageSpeed API for data. There’s no new vendor to manage, no new login to remember, and no annual contract to negotiate.

The compounding value of historical data

After 30 days of monitoring, you have a trend line. After 90 days, you have a story. After a year, you have a decision-making tool.

Historical performance data lets you answer questions that snapshots never could. When did our mobile score start declining? What change caused that drop in March? Is our overall trend improving or degrading? How did the site redesign actually affect performance?
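To make “when did the score start declining?” concrete, here’s a minimal sketch that scans a score history for the largest day-over-day drops. The history is made-up sample data and the function name is hypothetical. PageSpeed scores wobble by a few points from run to run, so the `min_drop` threshold is there to ignore that noise.

```python
def biggest_drops(history, min_drop=5):
    """Return (date, previous score, new score) for day-over-day drops >= min_drop.

    `history` is a list of (iso_date, score) tuples sorted by date.
    Results are ordered from the largest drop to the smallest.
    """
    drops = []
    for (_, prev_score), (day, score) in zip(history, history[1:]):
        if prev_score - score >= min_drop:
            drops.append((day, prev_score, score))
    return sorted(drops, key=lambda d: d[1] - d[2], reverse=True)


# Made-up mobile scores for five consecutive days.
mobile_history = [
    ("2025-03-10", 78),
    ("2025-03-11", 77),
    ("2025-03-12", 64),
    ("2025-03-13", 65),
    ("2025-03-14", 58),
]

for day, before, after in biggest_drops(mobile_history):
    print(f"{day}: {before} -> {after}")
```

Once you have the date, you can line it up against your deploy log or plugin update history to find the change that caused the drop.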

It also gives you ammunition for hard conversations. When a stakeholder wants to add yet another tracking script, you can show them the cumulative impact of the last five scripts on your performance score. Data beats opinions in every meeting.

The Google Sheets log creates this history automatically. No manual data entry. No copying and pasting from PageSpeed Insights. Just a clean, timestamped record that grows more valuable every day it runs.

See the PageSpeed Monitor Automation in Action

Watch how Ai1 monitors your site performance daily with our PageSpeed monitor automation workflow.
